In one line: it’s not that Subagents fall short; it’s that the cost of handing off Context has come to exceed the cost of rebuilding it.

At 2 AM during the early-April long weekend, I had five Claude Code sessions open simultaneously on my MacBook. Each one was doing something different — one was organizing the page structure for a knowledge base, another was designing a new Agent architecture, another was running an environment migration script — and I was switching between them, manually pasting the output of one session into the context of another, then extracting a snippet from that session’s reply and pasting it back into yet another, like some terribly inefficient human routing protocol.

The third time I found myself repackaging the same “we previously decided X, because of Y, and next we need to do Z” context to carry it from one session to another, I stopped. I had suddenly realized something: I wasn’t using AI to get things done. I was working as a moving crew for AI Context, and the cost of that hauling had gradually exceeded the cost of just letting a session start from scratch.

When I wrote the Skill vs Subagent piece earlier, I mentioned that the flow of Context determines whether you should use a Skill or a Subagent. And in the Agent Team theory article, I also explained why the hub-and-spoke Subagent model hits a ceiling when tasks require multiple Agents to collaborate[7]. Now that we know the theory — the reasoning behind “how things should work” — it’s time to actually look at “how things really work.”

Three Reasons I Decided to Build It

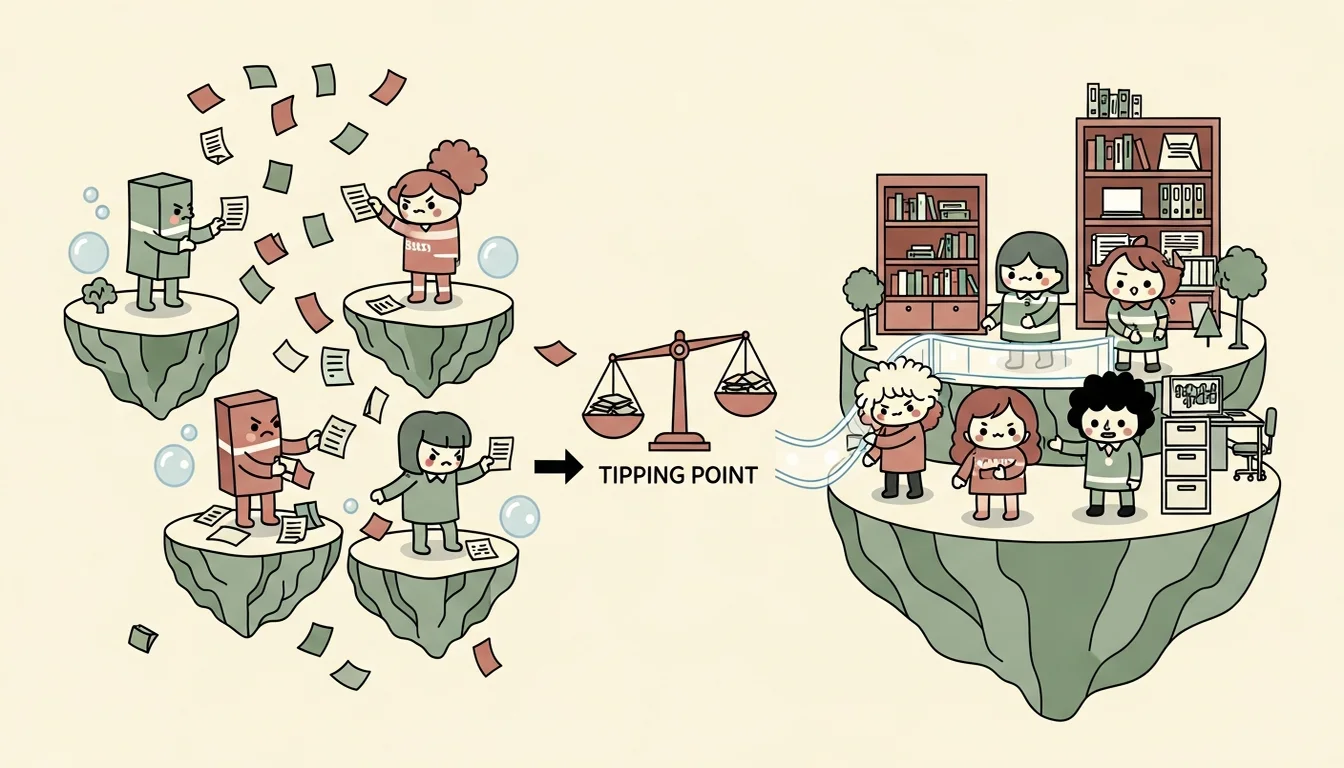

The Subagent model works great within a single session. The Parent Agent spawns a Subagent to handle a task, it finishes and brings the results back, Context flows naturally back to the parent — no human intervention needed[7]. But when work spans multiple sessions, multiple time periods, or even multiple machines, three problems start compounding.

The first is that Context doesn’t persist. When a Claude Code session ends, all the conversation history, all the decision-making context, all the understanding of intermediate outputs accumulated in that session — it all vanishes the next time you open a new session. You need to re-explain everything to the AI (this is the most fundamental challenge across Phase 1 through Phase 5 in the mindset series). If you’re only opening one or two sessions a day for simple tasks, this isn’t a problem. But when you’re simultaneously pushing five or six interrelated workflows, each spanning several days, you’ll find that the time you spend “getting the AI to understand the current situation” has exceeded the time you spend “getting the AI to do actual work.”

The second is that identity isn’t independent. A Subagent inherits the parent’s working directory and CLAUDE.md. It doesn’t know “who it is” — it’s just a temporary clone of the parent that disappears once done. This is fine for simple tasks. But when you need one Agent dedicated to knowledge management and another dedicated to asset cataloging, each with its own working methods and domain expertise, the Subagent’s “temp worker” identity isn’t enough. What you need are “full-time employees” with fixed identities, fixed responsibilities, and fixed tools — and that’s the starting point for Multi Agent/Agent Teams.

The third is that cross-session communication doesn’t exist. If Agent A makes a decision in one session and Agent B needs to know about it in another session, the common approach today is to copy that decision out of A’s session and paste it into B’s session, or to write it to a file for B to read. This is the gap that the Agent Teams feature in Claude Code addresses with a mailbox mechanism for multi-session communication[10]. But that feature was designed for “multiple workers in the same project,” like three people reviewing the same PR together. What I needed was “multiple specialists with independent identities and expertise,” each Agent with its own directory, its own CLAUDE.md, its own Skills. That’s a fundamentally different starting assumption from what Agent Teams was designed for (this distinction will be explored in detail in later articles).
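The “write it to a file for B to read” workaround can be sketched in a few lines. This is only an illustration of the idea, not the Agent Teams mailbox and not the setup used in this series: the file name `decisions.jsonl`, the agent names, and both helper functions are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def record_decision(log_path: Path, agent: str, decision: str, reason: str) -> None:
    """Append one decision as a JSON line so other sessions can read it later."""
    entry = {"agent": agent, "decision": decision, "reason": reason}
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")


def read_decisions(log_path: Path, skip: int = 0) -> list[dict]:
    """Return the decisions recorded after the first `skip` entries."""
    if not log_path.exists():
        return []
    lines = log_path.read_text(encoding="utf-8").splitlines()
    return [json.loads(line) for line in lines[skip:] if line.strip()]


# A throwaway directory stands in for the shared directory (assumption).
shared_dir = Path(tempfile.mkdtemp())
log = shared_dir / "decisions.jsonl"

# Session A writes its decisions.
record_decision(log, "agent-a", "use wiki pages for knowledge", "searchable plaintext")
record_decision(log, "agent-a", "one directory per agent", "independent identity")

# Session B has already seen the first entry and only wants what is new.
new_for_b = read_decisions(log, skip=1)
```

Even this much removes the copy-and-paste step, but a human still has to tell each session when to read the file, which is the part that does not scale.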

These three problems together form a tipping point: the cost of continuing with the Subagent model had exceeded the cost of redesigning an entirely new collaboration architecture.[8]

HANDOFF.md: The First Output Wasn’t Code

After deciding to build an Agent Team, the first thing I did wasn’t writing code, nor drawing architecture diagrams — it was writing a HANDOFF.md.

The content of this document was simple: who I am, what I’d been working on, where things currently stand, what the next steps are, and which past decisions shouldn’t be overturned. I started here because I knew exactly what was about to happen: this was going to be a multi-tasking Agent team that needed to work across platforms, across machines, across repos. I’d be opening multiple sessions, each responsible for a different aspect, and every new session would start with a blank slate — no memory of what came before. This is the Memory problem that Agent workflows constantly have to deal with.

The HANDOFF.md I wrote was the most primitive solution to this problem. No framework, no infrastructure required — just a plain-text MD file that clearly states “here’s the current state,” so the next Agent can read it and pick up where things left off[6].

The actual process went roughly like this: when the first session ended, I spent a minute writing up the work goals and plans that had accumulated in Claude Code’s memory into a document, saved to a shared directory. When the second session started, my first message was “read HANDOFF.md, know what we’re doing.” After reading it, the Agent knew the background context, knew which decisions had already been made, knew what to do next — no need for me to spend twenty minutes explaining everything again.
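As a rough sketch, the five things HANDOFF.md states (who I am, what I’d been working on, where things stand, next steps, and decisions not to overturn) can be held in a small structure and rendered to Markdown. The class name, the section headings, and every example value below are placeholders for illustration, not the contents of the real file.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """The five things the article says a HANDOFF.md should state."""
    who: str
    working_on: str
    current_state: str
    next_steps: list[str] = field(default_factory=list)
    settled_decisions: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        def bullets(items: list[str]) -> str:
            # An empty list still produces a visible line, so gaps are obvious.
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        sections = [
            ("Who I am", self.who),
            ("What I have been working on", self.working_on),
            ("Where things stand", self.current_state),
            ("Next steps", bullets(self.next_steps)),
            ("Decisions not to overturn", bullets(self.settled_decisions)),
        ]
        body = "\n\n".join(f"## {title}\n\n{text}" for title, text in sections)
        return f"# HANDOFF\n\n{body}\n"


# Placeholder values for illustration only.
handoff = Handoff(
    who="Operator of a multi-session Agent Team build",
    working_on="Setting up specialist Agents with their own directories",
    current_state="First session finished; goals and plans agreed",
    next_steps=["Start the second session by reading HANDOFF.md"],
    settled_decisions=["Defer the Memory mechanism until the framework matures"],
)
handoff_md = handoff.to_markdown()
```

Writing the result out is one more line, for example `Path("HANDOFF.md").write_text(handoff_md, encoding="utf-8")` with `pathlib.Path`. The structure matters less than the habit: a fixed set of headings makes it harder to skip the “decisions not to overturn” section, which is the one a fresh session is most likely to violate.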

Sounds primitive, right? After all, there are so many Memory Solutions on the market today. But this is precisely something I validated repeatedly throughout the entire building process: the core of an Agent Team isn’t complex communication protocols or elegant architecture design. It’s turning “implicit knowledge in a human’s head” into “plaintext that Agents can read.” This sounds simple but takes far more time than you’d expect, because you have to write down even the things that feel too obvious to state. The JIT loading principle from the Context Engineering article takes on an entirely different practical significance here[1]. That’s also why I decided to defer the Agent Team’s Memory mechanism until the entire framework was more mature. At this point, HANDOFF.md was enough.

Architecture Isn’t Designed — It Grows

After writing HANDOFF.md, the next step wasn’t designing a complete Agent Team architecture — how many Agents, what each one does, how they communicate, how they coordinate. That’s the idealized scenario. An Agent Team born for Operations should emerge from Operations, so architecture should grow out of needs, one piece at a time.

The first to emerge was Agent Em, because every session involved discussing prior decisions and knowledge, but that knowledge was scattered across different conversation logs with nobody systematically organizing it. I needed an Agent dedicated to “structuring knowledge.” It does nothing else — just ingest, query, lint — transforming raw conversations and documents into searchable wiki pages.

The second to emerge was Agent C7, because as Em’s wiki grew richer, I started needing to manage Skills, Commands, MCP Servers, and other executable assets. These assets needed someone to handle cataloging, indexing, quality validation, and provisioning. You can’t manually copy Skills every time, right? So I designed C7 as the asset manager.

The third was Agent Dm, because when I needed to create new Agents or handle new projects, the process of designing and building Agents or organizing/managing projects was repetitive (reading wiki knowledge, designing architecture, creating scaffolds, validating compliance). This process itself could be standardized. Dm’s three-role division (Architect using Opus for thinking and design, Scaffolder using Sonnet for execution and building, Validator using Sonnet for compliance verification) grew directly from this standardization need.

The fourth was Agent G7, because as Layer 3 worker Agents started multiplying, someone needed to manage their registration, task assignment, and status tracking. That shouldn’t be done manually by a human, nor should it be a side job for other Specialist Agents, because fleet management is an independent responsibility in its own right.
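To make “registration, task assignment, and status tracking” concrete, here is a minimal in-memory sketch of those three responsibilities. It is an assumption-laden illustration, not G7’s actual design: the class names, the two status values, and the “first idle worker” assignment rule are all invented for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Worker:
    name: str
    status: str = "idle"            # hypothetical states: "idle" or "busy"
    task: Optional[str] = None


@dataclass
class FleetRegistry:
    workers: dict[str, Worker] = field(default_factory=dict)

    def register(self, name: str) -> Worker:
        """Registration: add a new worker, refusing duplicate names."""
        if name in self.workers:
            raise ValueError(f"worker already registered: {name}")
        self.workers[name] = Worker(name)
        return self.workers[name]

    def assign(self, task: str) -> Optional[Worker]:
        """Task assignment: give the task to the first idle worker, or None if all are busy."""
        for worker in self.workers.values():
            if worker.status == "idle":
                worker.status, worker.task = "busy", task
                return worker
        return None

    def complete(self, name: str) -> None:
        """Mark a worker's current task as done and free it up."""
        worker = self.workers[name]
        worker.status, worker.task = "idle", None

    def status_report(self) -> dict[str, str]:
        """Status tracking: map each worker name to its current status."""
        return {w.name: w.status for w in self.workers.values()}


fleet = FleetRegistry()
fleet.register("worker-1")
fleet.register("worker-2")
assigned = fleet.assign("run environment migration")
```

Seen this way, the three duties share one piece of state, which is part of why fleet management reads as a responsibility of its own rather than a side job.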

Finally came GM, the Lead Agent and general manager. It was the last to take shape in the Agent Team, for a simple reason: before the other four Agents were built, GM’s responsibilities were ones I’d been handling myself. I was the one switching between sessions, deciding who should do what, hauling Context from here to there. Designing GM essentially meant working through a set of practical questions: “Of all these coordination tasks I’m doing manually, which can be systematized? Which can be automated? Which can be taken over by an Agent? Which require human intervention?”

Looking back at this process, the architecture ultimately grew into three layers:

- Layer 1 (Leader): GM, the sole human interface, responsible for conversation, insight extraction, and coordinating Layer 2

- Layer 2 (Specialists): Em, C7, Dm, G7, each with independent identity, directory, and Skills, responsible for specific domains

- Layer 3 (Workers): Work Agents designed by Dm and managed by G7, responsible for actual project execution

[Diagram: three-layer architecture. GM (Leader Agent) coordinates Em (Knowledge), C7 (Assets), Dm (Builder), and G7 (Fleet Mgr). G7 manages Worker 1, Worker 2, and Worker N. Dashed edges: Dm designs and builds the workers, Em supplies knowledge to Dm, and C7 provisions skills to Dm.]

But these three layers weren’t drawn on a whiteboard. They were added one by one over the course of seven days of building, each time I hit a moment of “nobody is responsible for this.”
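The resulting hierarchy is small enough to write down as data. The sketch below encodes only the management edges described above (GM coordinates the four specialists, G7 manages the workers) and derives each Agent’s layer from its distance to GM. The `MANAGES` table and both function names are hypothetical, and reading the hierarchy as the path a request takes is an interpretation, not something the design states.

```python
from collections import deque

# Management edges taken from the three-layer description above.
MANAGES = {
    "GM": ["Em", "C7", "Dm", "G7"],
    "G7": ["Worker 1", "Worker 2", "Worker N"],
}


def route_to(agent: str, root: str = "GM") -> list[str]:
    """Return the chain of agents from the leader down to `agent` (breadth-first search)."""
    queue = deque([[root]])
    while queue:
        path = queue.popleft()
        if path[-1] == agent:
            return path
        for child in MANAGES.get(path[-1], []):
            queue.append(path + [child])
    raise KeyError(f"unknown agent: {agent}")


def layer_of(agent: str) -> int:
    """Layer 1 is the leader, 2 the specialists, 3 the workers."""
    return len(route_to(agent))


worker_route = route_to("Worker 1")
```

Adding a new Agent is then a one-line change to the table, which matches how the team actually grew: one edge at a time, whenever a responsibility turned out to have no owner.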

References

[1] Anthropic — Effective Context Engineering for AI Agents https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents

[6] Black Dog Labs — Claude Code Decoded: The Handoff Protocol (handoff compresses 10,000+ tokens to 1,000-2,000 tokens) https://blackdoglabs.io/blog/claude-code-decoded-handoff-protocol

[7] Rick Hightower — Claude Code Subagents and Main-Agent Coordination: A Complete Guide (limitations of the subagent hub-and-spoke model) https://medium.com/@richardhightower/claude-code-subagents-and-main-agent-coordination-a-complete-guide-to-ai-agent-delegation-patterns-a4f88ae8f46c

[8] Towards Data Science — Why Your Multi-Agent System is Failing: Escaping the 17x Error Trap (DeepMind research: unstructured “bag of agents” produces 17.2x error amplification; partial counterpoint: emphasizes need for upfront architectural planning) https://towardsdatascience.com/why-your-multi-agent-system-is-failing-escaping-the-17x-error-trap-of-the-bag-of-agents/

[10] Claude Code Agent Teams official documentation https://code.claude.com/docs/en/agent-teams
